Length: about 6,750 characters. Estimated reading time: 22 minutes.

Anthropic says it solved the long-running AI agent problem with a new multi-session Claude SDK

Agent memory remains a problem that enterprises want to fix, as agents forget some instructions or conversations the longer they run. Anthropic believes it has solved this issue for its Claude Agent SDK, developing a two-fold solution that allows an agent to work across different context windows.

The core challenge of long-running agents is that they must work in discrete sessions, and each new session begins with no memory of what came before. Because context windows are limited, and because most complex projects cannot be completed within a single window, agents need a way to bridge the gap between coding sessions.

Anthropic engineers proposed a two-part approach for the Agent SDK: an initializer agent that sets up the environment, and a coding agent that makes incremental progress in each session and leaves artifacts for the next.

Anthropic said the failures it observed fell into two patterns. First, the agent tried to do too much at once, so the model ran out of context partway through the work. The next agent then had to guess what had happened, because no clear instructions had been passed on. The second failure occurred later, after some features had already been built: the agent saw that progress had been made and simply declared the job done.

This is where the two-part solution of Anthropic's agent comes in. The initializer agent sets up the environment, logging what agents have done and which files have been added. The coding agent will then ask models to make incremental progress and leave structured updates.
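The division of labor described above can be sketched in a few lines. This is a minimal illustration, not Anthropic's implementation, and it does not use the Claude Agent SDK: the file names `features.json` and `progress.log`, the function names, and the `implement` callback (which stands in for a model-driven coding step) are all assumptions made for the example. The initializer writes a feature list and a log to disk; each coding session starts with no memory, rebuilds its context from those files, completes exactly one feature, and records the result for the next session.

```python
import json
from pathlib import Path
from typing import Callable, List, Optional

# Hypothetical artifact names; the source does not specify file formats.
FEATURES_FILE = "features.json"
PROGRESS_FILE = "progress.log"


def initialize(workdir: Path, features: List[str]) -> None:
    """Initializer session: record every feature as not done and start the log."""
    state = [{"name": name, "done": False} for name in features]
    (workdir / FEATURES_FILE).write_text(json.dumps(state, indent=2))
    (workdir / PROGRESS_FILE).write_text("initializer: environment set up\n")


def coding_session(workdir: Path, implement: Callable[[str], None]) -> Optional[str]:
    """One coding session: read state from disk, finish one feature, log it.

    Returns the name of the completed feature, or None if nothing is left.
    """
    path = workdir / FEATURES_FILE
    state = json.loads(path.read_text())
    pending = [feature for feature in state if not feature["done"]]
    if not pending:
        return None  # done only when every feature is explicitly marked done

    feature = pending[0]        # one feature per session, to limit scope
    implement(feature["name"])  # stand-in for the model doing the work
    feature["done"] = True
    path.write_text(json.dumps(state, indent=2))
    with (workdir / PROGRESS_FILE).open("a") as log:
        log.write(f"coding: completed {feature['name']}\n")
    return feature["name"]


def run_until_done(workdir: Path, implement: Callable[[str], None],
                   max_sessions: int = 10) -> List[str]:
    """Run fresh sessions until no features remain or the session cap is hit."""
    completed = []
    for _ in range(max_sessions):
        name = coding_session(workdir, implement)
        if name is None:
            break
        completed.append(name)
    return completed
```

Limiting each session to one feature targets the first failure pattern (running out of context mid-task), and the explicit `done` flags target the second (declaring the job finished because some progress is visible). A real harness would replace `implement` with an agent call and would likely record richer notes than a one-line log entry.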

Anthropic noted that its approach is “one possible set of solutions in a long-running agent harness.” It is an early step in what could become a wider research area for many in the AI space. The company said its experiments have not yet shown whether a single general-purpose coding agent or a multi-agent structure works best across contexts. Its demo also focused on full-stack web app development, so further experiments should test whether the results generalize to other tasks.

Anthropic's efforts to enhance agent memory are crucial for consistent and reliable performance in enterprise applications. The solutions proposed by Anthropic can help bridge the gap between context windows and agent memory, enabling AI agents to perform more complex and longer-term tasks effectively. This development could be a significant step towards making AI agents more reliable and useful in various business contexts.

What to be thankful for in AI in 2025

This year has felt like living inside a permanent DevDay. Every week, some lab drops a new model, a new agent framework, or a new “this changes everything” demo. It’s overwhelming. But it’s also the first year I’ve felt like AI is finally diversifying — not just one or two frontier models in the cloud, but a whole ecosystem: open and closed, giant and tiny, Western and Chinese, cloud and local.

- OpenAI kept shipping strong: GPT-5, GPT-5.1, Atlas, Sora 2 and open weights

- China’s open-source wave goes mainstream

- Small and local models grow up

- Meta + Midjourney: aesthetics as a service

- Google’s Gemini 3 and Nano Banana Pro

OpenAI continued to push the frontier with GPT-5 and GPT-5.1, providing new variants and enhancing the capabilities of AI models. The company’s latest models have been integrated into various applications, including customer service platforms where they have shown significant improvements in resolving customer queries. China has also contributed significantly to the open-source AI ecosystem, with models like DeepSeek-R1 and Qwen3 gaining traction. These models are contributing to the diversity of the AI landscape, making AI more accessible and adaptable for various applications.

The development of small and efficient AI models, such as Liquid AI’s LFM2 and Google’s Gemma 3, is another area to be thankful for. These models are designed for low-latency and device-aware deployments, making AI more accessible for privacy-sensitive and offline workflows. Meta’s partnership with Midjourney to integrate aesthetic technology into its products is also noteworthy, as it will bring high-quality visuals to mainstream social tools.

Google’s Gemini 3 introduced new capabilities in reasoning, coding, and multimodal understanding, while Nano Banana Pro specializes in generating infographics and diagrams. These developments signal the maturation and diversification of AI technologies, reflecting a shift from a single dominant model to a rich ecosystem of models and applications.

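Behind the donation of its self-developed OS kernel: the sense of responsibility of vivo, a Rust pioneer

In the AI era, open source has become an important direction of development. As AI gradually moves into the physical world, new AI-native hardware places new demands on the operating system that connects the underlying hardware with top-level applications. vivo's self-developed operating system, BlueOS (蓝河操作系统), is written in Rust across the full stack, from the kernel to the system framework. Its strengths in security, AI capability, and smooth performance make it well suited to what AI-native hardware requires of an operating system.

BlueKernel is the operating system kernel that vivo developed in-house in Rust, with security, a light footprint, and generality as its core traits. What vivo has open-sourced is the kernel itself, the “heart” of the operating system, so hardware vendors, professional system developers, and the open-source community can all innovate on top of BlueKernel.

From open-sourcing and donating its operating system kernel to running an “innovation competition”, vivo continues to contribute to the industry and to push it toward growth. Its open-source kernel, BlueKernel, gives AI-native hardware such as AI glasses and robots a secure, general-purpose “heart”.

By donating BlueKernel, vivo both demonstrates its lead in Rust research and application and gives AI-native hardware a secure, lightweight, general-purpose kernel. The move should help the AI operating system ecosystem develop and encourage further technical innovation and adoption across the industry.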

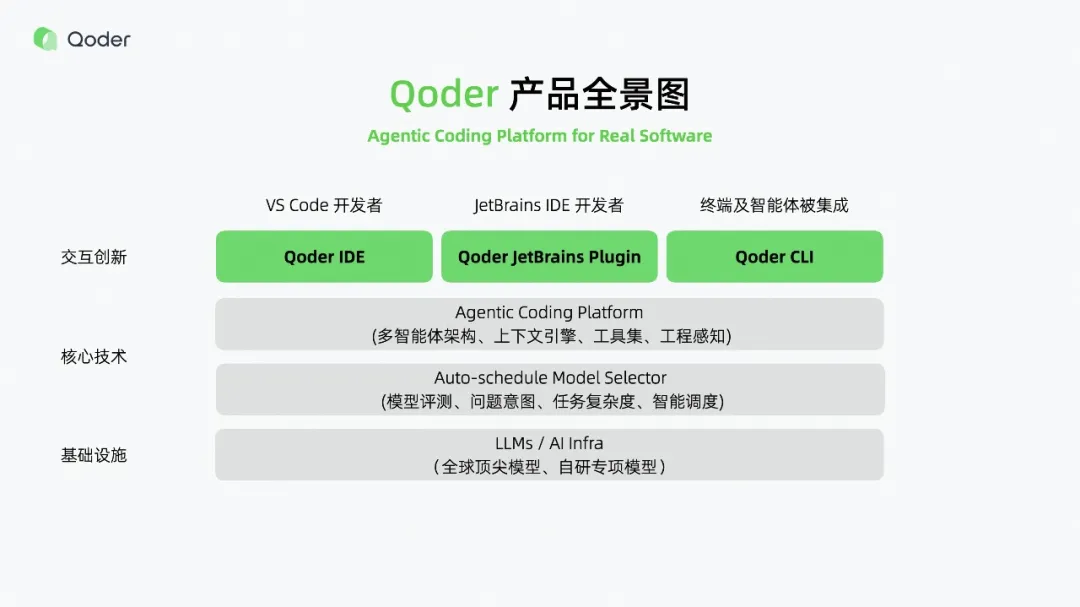

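From code completion to a production-grade tool for real software: how Qoder is rewriting the rules of AI programming

Qoder, the first AI development tool in China positioned as an “Agentic Coding platform”, marks a major step in AI programming from “code assistant” to “full-stack AI engineer that can complete complex tasks autonomously”. Its breakthrough lies in context engineering: it addresses three bottlenecks, namely coverage breadth, retrieval precision, and intent matching, and extends from file-level reading to project-level and engineering-level understanding.

Qoder tackles intent matching with two mechanisms, dynamic memory and one-click enhancement, and supports exporting and sharing the Repo Wiki so that the AI's contextual understanding stays in sync with the team's experience. Qoder also introduces Quest mode and a Spec-driven core philosophy, which make agent behavior controllable and traceable. Through these innovations, Qoder has become an important tool for production-grade code generation and has pushed AI programming tools toward greater practicality and professionalism.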

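Topping the video understanding leaderboards: Kuaishou open-sources its flagship Keye-VL model, a leader in multimodal video perception

Kuaishou's flagship Keye-VL model has made breakthrough progress on multimodal video understanding, and its open-source release gives the industry a powerful video perception capability. The model performs well across multiple video understanding tasks and can recognize and interpret text, images, sound, and other information in video, improving how video content can be analyzed and applied. The release both demonstrates Kuaishou's leading position in video understanding and gives developers and researchers a valuable resource and technical support.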

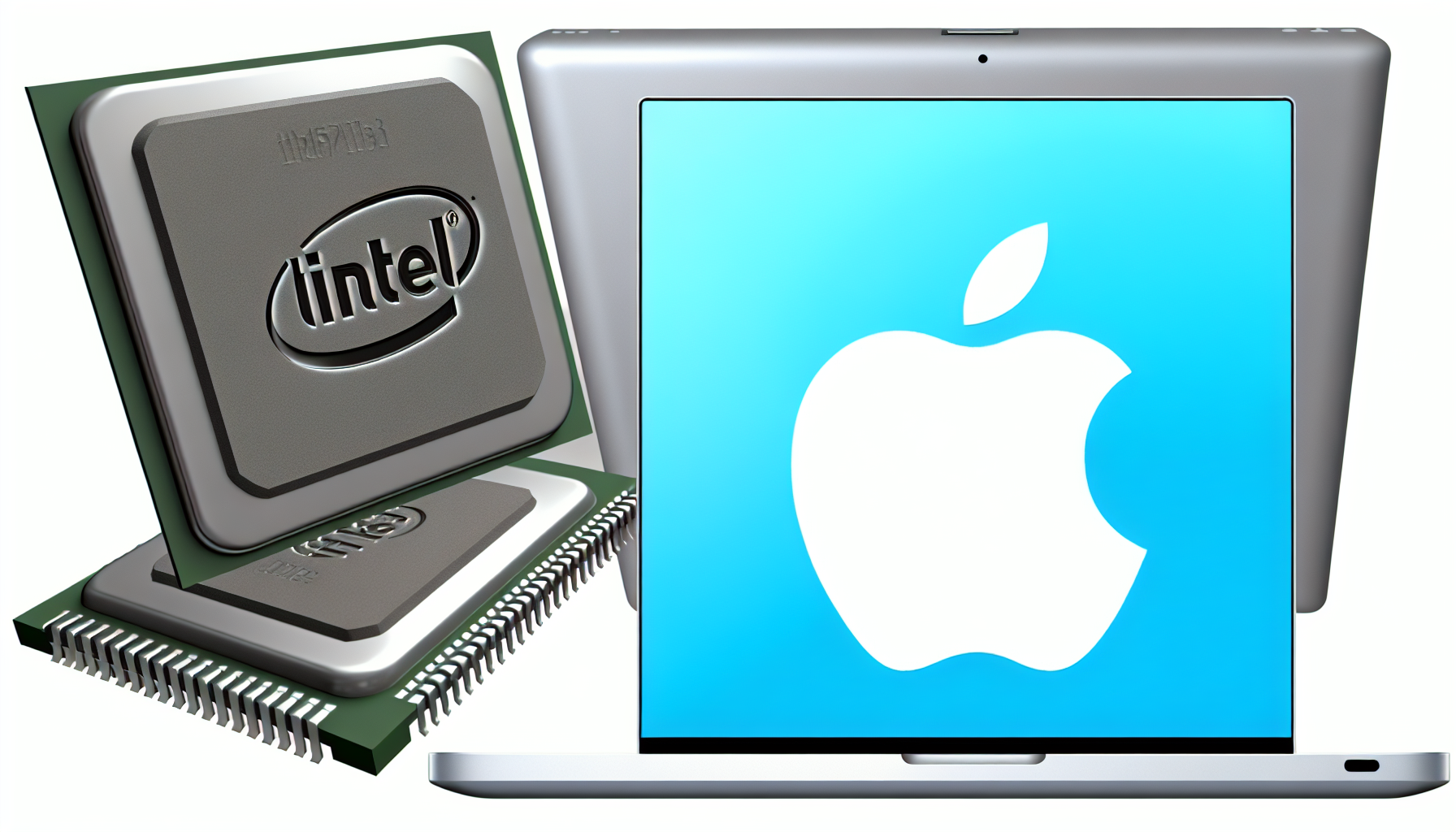

Intel Stock Soars 10% after Reliable Analyst Sees Delivery of Apple's Mac Chips in 2027

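Well-known analyst Ming-Chi Kuo predicts that Intel will begin supplying chips for Apple's Mac computers in 2027. The prediction sent Intel's stock up 10%. Becoming a chip supplier to Apple would further strengthen Intel's position in the high-end computing market and open up new opportunities for the company. Such a deal would also reflect Intel's progress and competitiveness in advanced process technology.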

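HUAWEI Mate 80 series launches: setting a new benchmark for high-end flagships with peak technology

The launch of the HUAWEI Mate 80 series marks a new round of competition for Huawei in the high-end smartphone market. The series uses advanced technology, including a powerful processor, a high-resolution display, and innovative camera technology, to set a new benchmark for high-end flagships. The Mate 80 series showcases Huawei's leading position in smartphone technology, gives consumers more high-end options, and pushes the smartphone market toward further technical upgrades.

Year-end exam for the “first year of mass-market intelligent driving”: has Chery's Falcon (猎鹰) intelligent driving kept its promises?

Chery's Falcon intelligent driving system is an important real-world effort in intelligent driving. Its progress in technology deployment and market rollout reflects both Chery's push on its intelligence strategy and the state of China's intelligent driving industry. Through it, Chery has shown its strength in intelligent driving technology and has given the industry a reference point for moving from concept to practical use. Whether Falcon succeeds will directly affect Chery's competitiveness in the future intelligent driving market.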

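Yidong Electronics (奕东电子) acquires Shenzhen Guanding (深圳冠鼎) for 61.2 million yuan, doubling down on liquid cooling under earnings pressure | M&A Frontline

Yidong Electronics is acquiring Shenzhen Guanding for 61.2 million yuan to strengthen its position in liquid cooling. Liquid cooling has important applications in data centers and high-performance computing, and the acquisition should help Yidong Electronics compete in that market. Facing pressure on its earnings, the company is using the deal to increase its bet on liquid cooling, an active move into an emerging technology that gives it a new direction for future growth.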

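High-premium “family acquisition” comes with no impairment commitment as Yuanli (元力股份) makes its seventh acquisition in search of a breakthrough | M&A Frontline

Yuanli's high-premium acquisition carries no impairment commitment, which reflects an unusual set of considerations in its deal strategy. Despite the absence of that commitment, Yuanli hopes the acquisition will expand its business and help it break through in the market. The company's string of acquisitions reflects an active response to market challenges and an effort to integrate resources and upgrade its business through M&A.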

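In the fight for the next “super entry point”, Alibaba and ByteDance are bound to clash

In competing for the next “super entry point”, Alibaba and ByteDance have each laid out their strategies in technology and in the market. Whether through AI technology or platform ecosystems, both companies are working to build new traffic entry points in order to gain an advantage in future competition. The contest reflects fierce rivalry between the two in technology and market share, and it also shows how tech giants are positioning themselves for where the industry is heading.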

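Summary

In AI in 2025, open source and technical innovation remained the main trends. From Anthropic's work on the agent memory problem, to OpenAI's steady stream of new models, to vivo open-sourcing its Rust kernel, to Qoder's agentic coding platform, these events show AI technology solving practical problems, moving the industry forward, and strengthening the open-source ecosystem. These advances improve the performance and reliability of AI systems, give developers and enterprises more choices, and drive wider adoption of and innovation in AI.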

Author: Qwen/Qwen2.5-32B-Instruct

Sources: VentureBeat, GeekPark (极客公园), TMTPost (钛媒体), QbitAI (量子位), Synced (机器之心)

Editor: 小康 (Xiaokang)