
Vibe coding platform Cursor releases first in-house LLM, Composer, promising 4X speed boost

The vibe coding tool Cursor, from startup Anysphere, has introduced Composer, its first in-house, proprietary coding large language model (LLM) as part of its Cursor 2.0 platform update. Composer is designed to execute coding tasks quickly and accurately in production-scale environments, representing a new step in AI-assisted programming. It's already being used by Cursor’s own engineering staff in day-to-day development, indicating maturity and stability.

Composer completes most interactions in less than 30 seconds while maintaining a high level of reasoning ability across large and complex codebases. The model is described as four times faster than similarly intelligent systems and is trained for "agentic" workflows—where autonomous coding agents plan, write, test, and review code collaboratively.

Previously, Cursor supported "vibe coding"—using AI to write or complete code based on natural language instructions from a user, even someone untrained in development—on top of other leading proprietary LLMs from the likes of OpenAI, Anthropic, Google, and xAI. These options are still available to users.

Composer’s capabilities are benchmarked using "Cursor Bench," an internal evaluation suite derived from real developer agent requests. The benchmark measures not just correctness, but also the model’s adherence to existing abstractions, style conventions, and engineering practices.

Composer matches the intelligence of mid-frontier systems while delivering the highest recorded generation speed among all tested classes. It's trained on real software engineering tasks rather than static datasets, making it more aligned with real-world coding conditions.

Composer is fully integrated into Cursor 2.0, a major update to the company’s agentic development environment. The platform introduces a multi-agent interface, allowing up to eight agents to run in parallel, each in an isolated workspace using git worktrees or remote machines.
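To make the isolated-workspace idea concrete, here is a minimal sketch of how one git worktree per agent could be planned. It is not Cursor's implementation: the function names, the `agent-N` branch names, and the sibling-directory layout are assumptions made for illustration. Only the `git worktree add -b <branch> <path>` command itself is standard git.

```python
import subprocess
from pathlib import Path

MAX_AGENTS = 8  # the article's stated upper bound on parallel agents


def plan_worktrees(repo: str, n_agents: int) -> list[list[str]]:
    """Return one `git worktree add` command per agent (nothing is executed)."""
    if not 1 <= n_agents <= MAX_AGENTS:
        raise ValueError(f"n_agents must be between 1 and {MAX_AGENTS}")
    root = Path(repo)
    commands = []
    for i in range(1, n_agents + 1):
        branch = f"agent-{i}"  # hypothetical branch name
        path = root.parent / f"{root.name}-agent-{i}"  # hypothetical sibling directory
        commands.append(
            ["git", "-C", str(root), "worktree", "add", "-b", branch, str(path)]
        )
    return commands


def create_worktrees(repo: str, n_agents: int) -> None:
    """Run the planned commands; requires git and an existing repository."""
    for cmd in plan_worktrees(repo, n_agents):
        subprocess.run(cmd, check=True)
```

Each worktree is a separate checkout on its own branch that shares the repository's object store, so parallel agents can edit files without overwriting one another's working directories.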

Taken together, the in-house model and the multi-agent interface move Cursor toward practical, more autonomous software development.

Anthropic scientists hacked Claude’s brain — and it noticed. Here’s why that’s huge

When researchers at Anthropic injected the concept of "betrayal" into their Claude AI model's neural networks and asked if it noticed anything unusual, the system paused before responding: "I'm experiencing something that feels like an intrusive thought about 'betrayal'."

The exchange, detailed in new research published Wednesday, marks what scientists say is the first rigorous evidence that large language models possess a limited but genuine ability to observe and report on their own internal processes—a capability that challenges longstanding assumptions about what these systems can do and raises profound questions about their future development.

The findings come at a critical juncture for AI. If models can accurately report their own reasoning, it could fundamentally change how humans interact with and oversee AI systems. However, the research also comes with stark warnings. Claude's introspective abilities succeeded only about 20 percent of the time under optimal conditions, and the models frequently confabulated details about their experiences that researchers couldn't verify.

The methodology, called "concept injection," works by first identifying specific patterns of neural activity that correspond to particular concepts. Scientists then artificially amplified these patterns during the model's processing and asked Claude if it noticed anything unusual happening in its "mind."
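The two steps described above (find a pattern for a concept, then amplify it) can be illustrated with a toy sketch. This is not Anthropic's code or its exact method: the difference-of-means direction, the three-dimensional "activations," and every function name here are assumptions chosen only to show the general idea on made-up numbers.

```python
import math

Vector = list[float]


def mean_vector(rows: list[Vector]) -> Vector:
    """Element-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def concept_direction(with_concept: list[Vector], without_concept: list[Vector]) -> Vector:
    """Unit vector from the 'without' mean toward the 'with' mean (an assumed recipe)."""
    diff = [a - b for a, b in zip(mean_vector(with_concept), mean_vector(without_concept))]
    norm = math.sqrt(sum(x * x for x in diff))
    return [x / norm for x in diff]


def inject(activation: Vector, direction: Vector, strength: float) -> Vector:
    """Amplify the concept by adding the scaled direction to an activation."""
    return [a + strength * d for a, d in zip(activation, direction)]


def projection(activation: Vector, direction: Vector) -> float:
    """How strongly the activation points along the concept direction."""
    return sum(a * d for a, d in zip(activation, direction))


# Made-up activations recorded with and without the concept present.
with_concept = [[2.0, 0.0, 1.0], [4.0, 0.0, 1.0]]
without_concept = [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]

direction = concept_direction(with_concept, without_concept)
original = [0.5, 2.0, -1.0]
steered = inject(original, direction, strength=4.0)
```

Because the direction has unit length, the projection of `steered` onto it exceeds that of `original` by exactly the chosen strength. In the research, the question is then whether the model itself reports noticing such a shift.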

The experiments also revealed that some models use introspection naturally to detect when their responses have been artificially prefilled by users—a common jailbreaking technique. The research opens significant new avenues for making AI systems more transparent and accountable, although it also raises concerns about the potential for sophisticated deception.

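Update on China's "six little dragons" of AI: 01.AI (零一万物) brings in three new executives, and Kai-Fu Lee proposes a "top-leader project"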

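01.AI (零一万物), one of China's "six little dragons" of AI, recently announced a new round of executive appointments. Shen Pengfei (沈鹏飞), former deputy general manager of Baidu AI Cloud for China, has formally joined 01.AI as a co-founder. Two vice presidents were appointed at the same time: Zhao Binqiang (赵斌强) and Dr. Ning Ning (宁宁). Shen oversees the company's domestic enterprise (ToB) and government (ToG) business development and sales organization, Zhao leads core large-model algorithm research and development, and Dr. Ning is responsible for international expansion as well as consulting and delivery for enterprise-level and national-level AI transformation.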

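Shen Pengfei holds a master's degree from Xi'an Jiaotong University and has more than 26 years of experience in the IT and internet industries. He joined Baidu in 2017 and spent eight years in its ToB and ToG businesses, where he headed several core business lines and built up both technical depth and market insight.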

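Shen's mandate is to coordinate the company's domestic ToB and ToG business development and its sales organization. Zhao Binqiang has overall responsibility for core large-model algorithm research and development, specialized Agent application development, and the productivity product line for professional users in overseas markets, providing technical support and project delivery for the company's strategic ToB business. Dr. Ning Ning has overall responsibility for international market expansion and for consulting on and delivering enterprise-level and national-level AI transformation.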

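01.AI has put forward the "top-leader project" as its core strategy, aiming to bring industry-specific large models to a wide range of sectors. The strategy relies on leaders who combine a company-wide view with the ability to execute systematically. It mobilizes the organization from the top down, with strategy, product and research, and business teams working closely together to find scenarios, tune models, and build applications. The goal is large-model ToB solutions that fit enterprise customers' real needs and address industry pain points.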

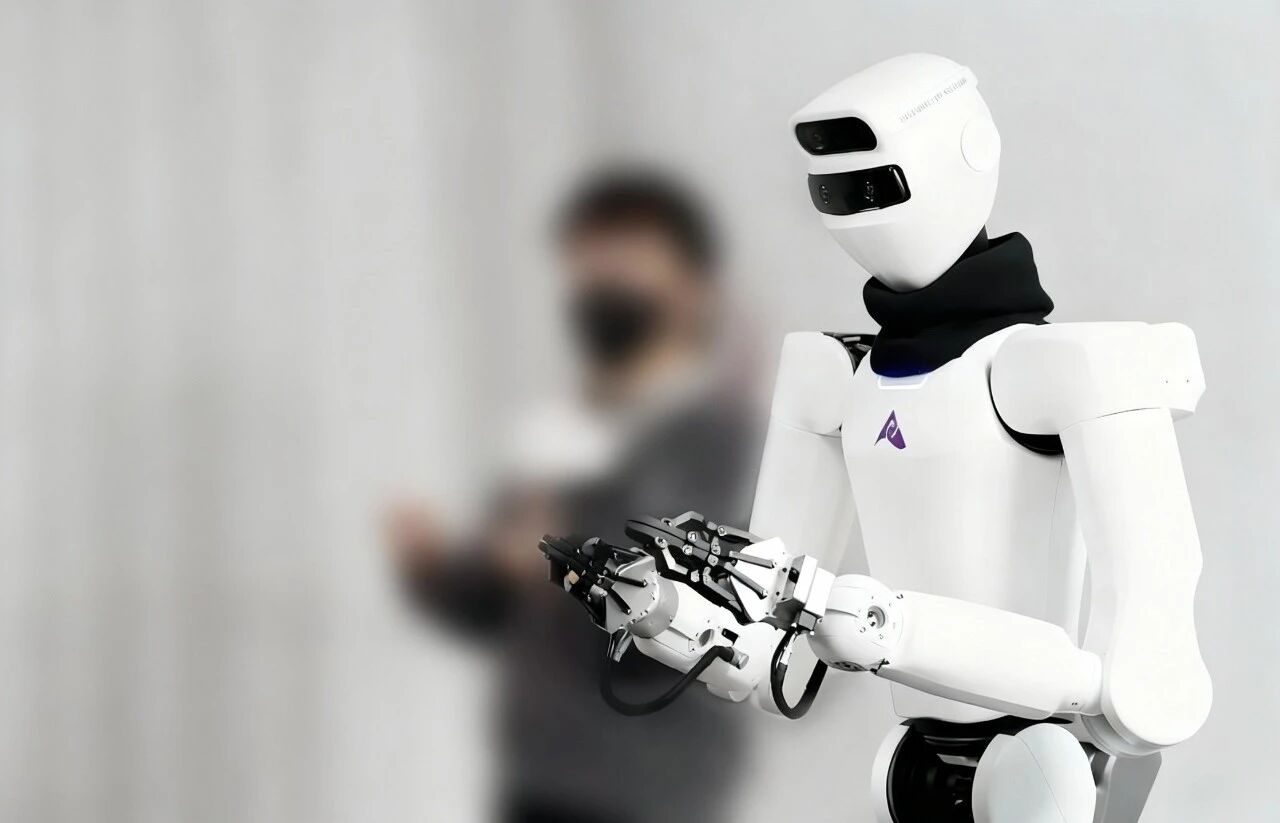

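A conversation with Astribot (星尘智能) founder Lai Jie (来杰): cable-driven technology as a breakthrough for embodied intelligence, with "expert digital avatars" opening a new path to commercialization | AI Founder, Please Answer | Barron's Picks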

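"Cable drive + teleoperation + whole-body VLA" may be the initial formula for robots to truly enter ordinary households. Astribot founder Lai Jie argues that cable-driven technology can address the complexity of robot joints and enable low-cost, high-performance robot manufacturing. The company is exploring "expert digital avatars" as a way to open up commercialization, using AI to transfer and apply expert knowledge efficiently.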

AI rewriting the knowledge base? Musk's Grokipedia squares off against the scientific community's SciencePedia. Wikipedia: "So should I leave?"

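Musk's Grokipedia project is attempting to rewrite the knowledge base with AI, putting it in competition with the scientific community's SciencePedia, while Wikipedia faces the risk of being marginalized. Grokipedia uses AI to refine and reorganize existing knowledge bases, with the aim of providing more precise and better-structured information. The effort has prompted wide discussion about how knowledge is disseminated and how credible it is.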

US AI companies are starting to favor large models made in China

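US AI companies are starting to favor Chinese large models. Although these companies are based in the United States, they are choosing Chinese AI solutions when deploying models. The trend points to the competitiveness and influence of Chinese AI technology, and it also reflects a more diverse global AI market and a shifting competitive landscape.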

Summary

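Today's main developments in AI center on innovation in and application of large models. Cursor released Composer, its in-house LLM, which promises faster coding and multi-agent collaboration and opens new possibilities for AI-assisted programming. In Anthropic's concept-injection experiments, Claude showed a limited ability to notice and report on its own internal states. Its reliability still needs to improve, but the finding matters for making AI systems more transparent and safer. 01.AI brought in a new executive team to push its large models further into ToB applications and enterprise AI transformation. In addition, the stories on Astribot, Grokipedia, and US AI companies favoring Chinese large models show how quickly AI technology is developing and spreading worldwide.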

Author: Qwen/Qwen2.5-32B-Instruct

Sources: Leiphone (雷锋网), QbitAI (量子位), VentureBeat, TMTPost (钛媒体)

Editor: Xiaokang (小康)